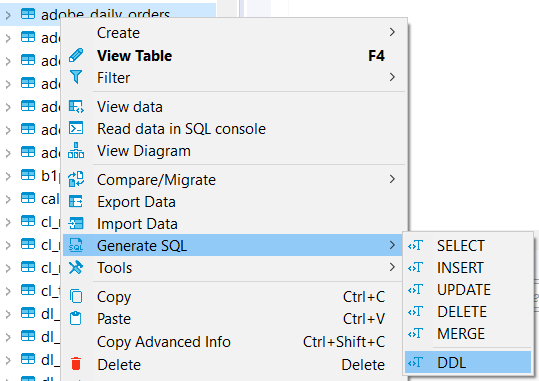

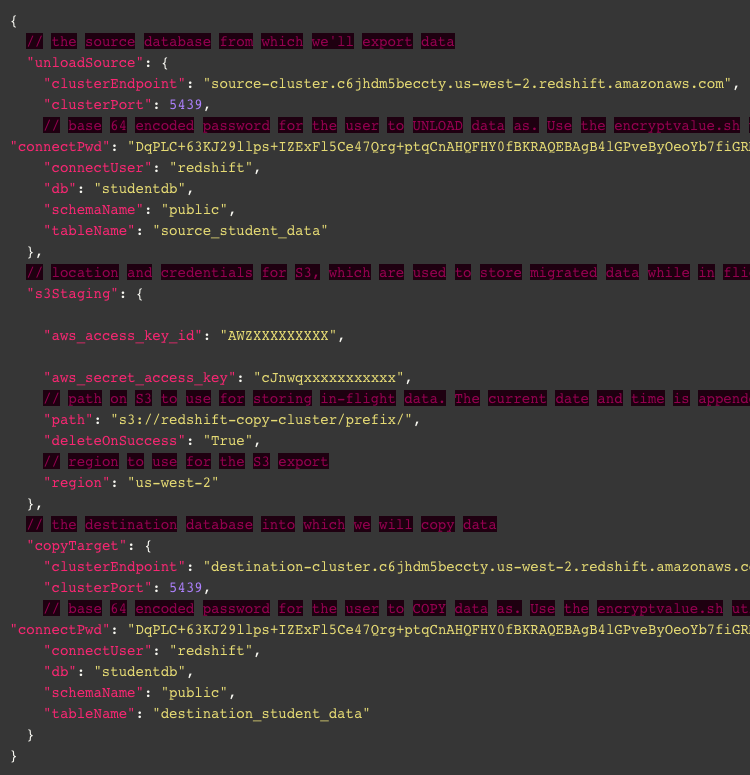

Once one such update broke mysql bin log and all of our replication tasks stopped working. This checkbox if checked, will upgrade database in the maintenance window and reboot the database. Be very careful about RDS’s Auto Minor Version Upgrade feature.Make sure that all replication tasks are completely stopped before you reboot the database. Be careful about the source database reboot.Here are some of the issues we faced in production: It’s not a big issue since MySQL Enums can be easily replaced by check constraints or foreign keys with lookup tables. DMS doesn’t support Enum fields in MySQL.Here is what they say in documentation – “ If you haven’t created primary or unique keys on the target tables, then AWS DMS must do a full table scan for each update, which can significantly impact performance”. Redshift doesn’t obey the primary keys and hence sometimes you may be tempted not to define them in the schema. If you don’t specify primary keys on the target tables (Redshift tables in our case), your replication latency may increase.Here are some of the implementation issues we faced: The motive behind this post is to share the knowledge we gained while implementing DMS at GumGum. You can learn more about varous DMS replication related concepts at DMS will watch the bin log for any changes and as soon as the changes appear in binlog, DMS will replicate it to the target tables. You need to enable ROW level binlog in MySQL and create the replications tasks. You can place one or more replication tasks on one replication instance. Creating replication tasks to replicate data from one table to another table was few clicks in the console. You can define source endpoints (MySQL) and target endpoint (Redshift). DMS has concept of replication tasks and replication instances. Once decided, the implementation was fairly easy. DMS also have a good API which makes it easy to use.

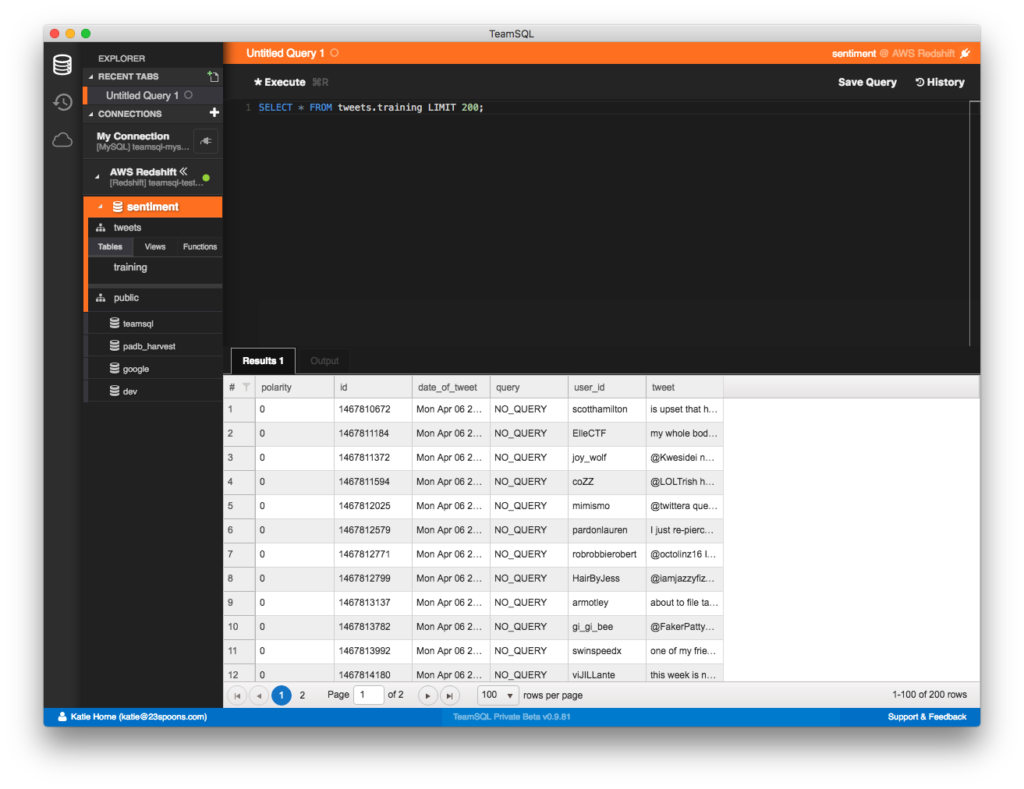

Furthermore it would require no approvals from anybody to use a one more AWS service. Our security conscious ops team didn’t like the idea of sending sensitive data to an outside company server and bringing back in again. When we learned that AWS offered a similar solution it was a no brainer. But we were always looking for a managed solution. There is even an open source solution – Tungsten. There are many options available in the market for such a replication – FiveTran, Alooma, Informatica. Our script was simply not capable of replicating changes in real time. Furthermore, some users were demanding that changes to some tables are reflected in the warehouse immediately. But we started opening offices worldwide (London and Sydney) and this wasn’t an option anymore. This would take down our reporting dashboard for 30 minutes every night. This script would simply read all the rows from a MySQL table, delete all the rows in Redshift table and insert all the rows in the Redshift table. In order to run the reports accurately it’s important that all the modifications done to the dimension tables in MySQL are transferred to Redshift in timely manner.īefore using DMS, we had a Groovy script that was transferring data from MySQL to Redshift every night. This database contains all of our fact tables. Our reporting dashboard connects with our data warehouse built on Redshift. This database contains all of our dimension tables.

The MySQL database is our primary OLTP database and many of our employees login to an internal application to modify data within this database. In our case, both of our datastores – RDS (MySQL) and Redshift were already in the cloud. But the service could be very useful for replicating data between the two datastores within the cloud as well. AWS’s Database Migration Service (DMS) is often misunderstood as a service that can only migrate your data to the cloud.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed